Open show | Winter 2024 | OCAD University

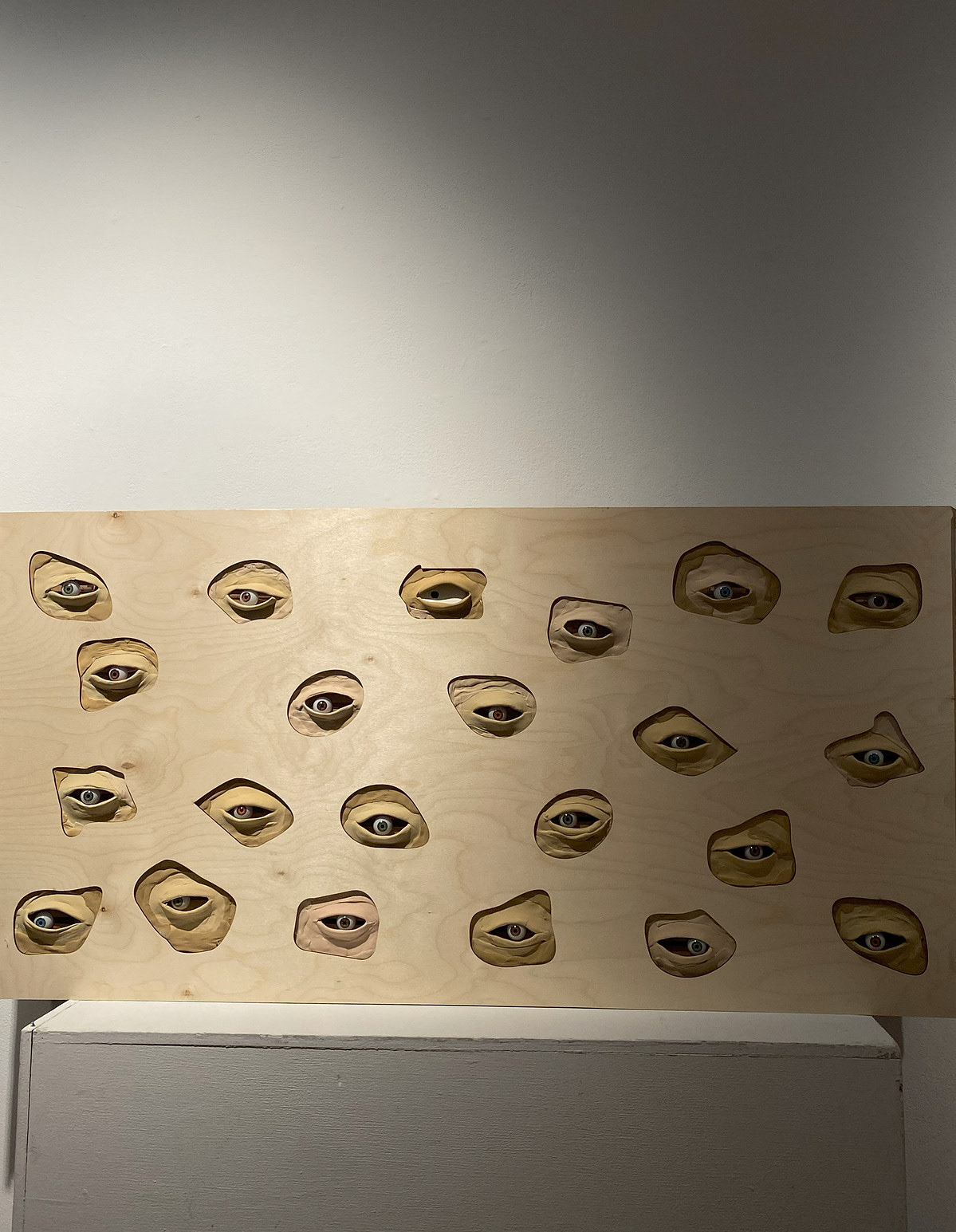

Gazing, an insidious, invasive phenomenon, often brings with it unseen pressures and voyeuristic discomfort. This interactive installation, Gazed, explores the unsettling concept of being watched, embodying a hidden yet intrusive form of scrutiny that many encounter in daily life. A gaze can range from curious to invasive, imposing an invisible tension on those it targets. Our project replicates this uncanny sensation through an installation of “eyes” that silently observe the viewer, creating a presence that is both captivating and unnerving.

Technical Implementation

The installation is built on a wooden board as the main structure, with cardboard for support. An Arduino Nano 33 IoT serves as the central control unit and drives two servo motors, each moving 11 eyes in sync.

Each servo has a custom arm that connects to a central shaft. Bamboo sticks link the eyes to these shafts, so a single servo moves multiple eyes simultaneously. A webcam handles tracking: its feed is processed in p5.js with the ml5.js library to detect viewers’ shoulder positions.
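The shoulder detection step can be sketched roughly as follows. This is only an illustration, not the project's code: it assumes each detected pose arrives as an object with a `keypoints` array of `{ name, x, y, confidence }` entries, which is how recent ml5.js pose models report results, and the installation may use a different model or version with other field names. `findShoulders` and `MIN_CONFIDENCE` are hypothetical names.

```javascript
// Assumed result shape: one pose = { keypoints: [{ name, x, y, confidence }, ...] }.
// The project's actual ml5.js model and version may use different field names.
const MIN_CONFIDENCE = 0.3; // hypothetical threshold for a usable keypoint

// Return both shoulder positions, or null if either one is missing or unreliable.
function findShoulders(pose, minConfidence = MIN_CONFIDENCE) {
  const pick = (name) => {
    const kp = pose.keypoints.find((k) => k.name === name);
    return kp && kp.confidence >= minConfidence ? { x: kp.x, y: kp.y } : null;
  };
  const left = pick("left_shoulder");
  const right = pick("right_shoulder");
  return left && right ? { left, right } : null;
}
```

Returning `null` when a shoulder is uncertain lets the sketch hold the eyes still instead of jerking toward a bad detection.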

The eyelids were crafted with air-dry clay and colored to blend with the wooden board, giving the eyes a subtle, realistic look.

Circuit Diagram

The webcam captures the viewer’s movements in real time and passes the video to p5.js for processing. p5.js then transmits the resulting data to the Arduino Nano 33 IoT, which controls the two servo motors that guide the eyes. The circuit diagram shows how the Arduino is connected to the servo motors and how data flows through each component to create a responsive interaction.
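The write-up does not state how the data is encoded on its way to the Arduino. As a minimal sketch, assuming a plain-text message of two comma-separated angles ending in a newline, the p5.js side could format it as below. The function names and the message format are hypothetical; `parseServoMessage` only mirrors, in JavaScript, what the receiving side would need to do.

```javascript
// Hypothetical wire format: "angleA,angleB\n", each angle an integer in 0..180.

// Round and clamp an angle to the range a hobby servo typically accepts.
function clampAngle(angle) {
  return Math.min(180, Math.max(0, Math.round(angle)));
}

// Build one message carrying the target angle for each of the two servos.
function formatServoMessage(angleA, angleB) {
  return `${clampAngle(angleA)},${clampAngle(angleB)}\n`;
}

// Parse a message back into two angles; returns null for malformed input.
function parseServoMessage(line) {
  const match = /^(\d{1,3}),(\d{1,3})$/.exec(line.trim());
  return match ? { angleA: Number(match[1]), angleB: Number(match[2]) } : null;
}
```

Clamping before sending keeps out-of-range values from reaching the servos if a detection lands outside the camera frame.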

Final Code

This code controls the servo motors based on a person’s shoulder positions detected in the camera feed. It maps the detected shoulder positions to servo angles and sends them to the Arduino, which moves the connected servos. Within it, drawTarget() marks the detected shoulder positions on screen with a pulsing effect, measureDistance() calculates the distance between two points (the shoulders), and sendDataToArduino() sends the computed servo angles to the Arduino so the servos adjust in real time to the person’s pose.
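The mapping and distance steps described above might look like the following. This is a sketch under assumptions rather than the project's actual code: it assumes each shoulder's horizontal position is mapped linearly across the camera frame to a 0 to 180 degree servo angle, with one shoulder per servo. `mapClamped` and `shouldersToAngles` are hypothetical names, and `measureDistance` here is only a guess at what the function of that name does.

```javascript
// Linearly map a value from one range to another, clamped to the output range.
function mapClamped(value, inMin, inMax, outMin, outMax) {
  const t = (value - inMin) / (inMax - inMin);
  const clamped = Math.min(1, Math.max(0, t));
  return outMin + clamped * (outMax - outMin);
}

// Euclidean distance between two points, e.g. the two shoulders.
function measureDistance(a, b) {
  return Math.hypot(b.x - a.x, b.y - a.y);
}

// Assumption: the left shoulder drives one servo and the right shoulder the other.
function shouldersToAngles(left, right, frameWidth) {
  return {
    servoA: Math.round(mapClamped(left.x, 0, frameWidth, 0, 180)),
    servoB: Math.round(mapClamped(right.x, 0, frameWidth, 0, 180)),
  };
}

// Example: a viewer whose left shoulder sits at the centre of a 640 px wide frame.
const angles = shouldersToAngles({ x: 320, y: 200 }, { x: 640, y: 200 }, 640);
console.log(angles); // { servoA: 90, servoB: 180 }
```

The shoulder distance gives a rough proxy for how close the viewer stands, since the shoulders appear farther apart in the frame as the person approaches the camera.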